External Compute

This chapter discusses External Compute - one of Stardog’s features for pushing heavy workloads to compute platforms like Databricks. This page primarily discusses what is external compute, how it works, and what are the supported operations. See the Chapter Contents for a short description of what else is included in this chapter.

Page Contents

Overview

Stardog supports operations where the workload can be pushed to external compute platforms.

The Supported Compute Platform is Databricks.

Supported Operations are:

- Virtual Graph Materialization using

virtual-importCLI command and SPARQL Update Queries (add/copy) - Virtual Graph Cache using

cache-createandcache-refreshCLI commands

How it Works

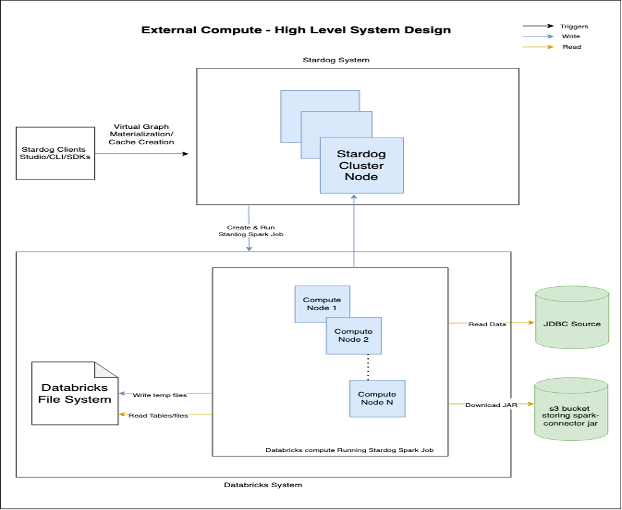

Stardog converts the supported operation into a Spark job, connects to the external compute platform, uploads the spark-connector.jar, and then creates and triggers the Spark job. This Spark job does the required computation on the external compute platform and then connects back to the Stardog server (using the credentials of the user that triggered the operation) to write the results to Stardog.

Architecture

The high level architecture for external compute is as shown:

Chapter Contents

- Databricks Configuration - discusses configuring Databricks as an external compute platform in Stardog.

- Virtual Graph Materialization - discusses how to materialize Virtual Graphs using external compute platform in Stardog